If you work in finance, the biggest risk from AI is not that it replaces your job.

It’s that the business stops coming to finance – and your entire department is downsized or replaced.

For decades, finance has been the place people go when they need to understand the financial consequences of a decision. Should we drop price? Can we hire more staff? What is this expense doing in my cost centre?

Historically, those questions flowed naturally toward the finance team because the information and expertise largely sat there.

AI is changing that.

Today, anyone in an organisation can open ChatGPT, type a question about pricing, margins, inventory or financial impacts, and receive an answer in seconds.

Often, the answer sounds intelligent and well structured. It explains concepts clearly and provides logical reasoning.

For a busy executive or operational leader, that can feel like a faster and easier alternative to walking down the hall to the finance team and being confused by jargon.

And that is where the real risk sits.

Not that AI gives wrong answers, but that it gives incomplete ones.

A colleague of mine (let’s call him Jim) had just stepped into a CEO role after the business had been acquired. During his early review of the operation, he discovered that roughly 30 percent of the company’s inventory was effectively obsolete.

His first instinct was straightforward: write it off.

He understood that it would hit the profit and loss statement, but because the business had just been acquired, the regional CFO mentioned that it might also affect something called “goodwill on acquisition.” He wasn’t entirely sure what that meant.

So he did what many leaders now do.

He opened ChatGPT and asked.

The explanation he received was technically correct. The write-off would reduce the value of inventory and could shift the balance between identifiable assets and goodwill arising from the acquisition (probably in someone else’s books). The explanation was clear enough that he felt comfortable proceeding.

From his perspective, the conclusion was simple. The write-off was largely an accounting adjustment between balance sheet items and entities and wouldn’t materially harm the business.

When we later discussed the situation, one additional factor came up that had never been part of the AI conversation.

The company had working capital covenants with its bank.

Writing off that much inventory would reduce current assets and could push the business outside the covenant thresholds. That might trigger a renegotiation with the bank or, in the worst case, a breach.
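To make the covenant risk concrete, here is a minimal Python sketch with made-up figures. It assumes the covenant is a minimum current ratio (current assets divided by current liabilities) of 1.5; real covenants vary, and the balance sheet numbers, the threshold and the names below are hypothetical. It also ignores tax effects.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class BalanceSheet:
    """Simplified current section of a balance sheet (hypothetical figures, $m)."""
    cash: float
    receivables: float
    inventory: float
    current_liabilities: float

    @property
    def current_assets(self) -> float:
        return self.cash + self.receivables + self.inventory

    @property
    def current_ratio(self) -> float:
        return self.current_assets / self.current_liabilities


def write_off_inventory(bs: BalanceSheet, fraction: float) -> BalanceSheet:
    """Return a new balance sheet with `fraction` of inventory written off."""
    return replace(bs, inventory=bs.inventory * (1 - fraction))


MIN_CURRENT_RATIO = 1.5  # hypothetical covenant threshold

before = BalanceSheet(cash=2.0, receivables=4.0, inventory=6.0, current_liabilities=7.5)
after = write_off_inventory(before, 0.30)  # the 30 percent obsolete stock

for label, bs in (("before", before), ("after", after)):
    status = "pass" if bs.current_ratio >= MIN_CURRENT_RATIO else "BREACH"
    print(f"{label}: current ratio {bs.current_ratio:.2f} -> {status}")
```

With these illustrative numbers the ratio falls from 1.60 to 1.36: the same write-off that looks like a balance sheet reclassification moves the business from compliant to in breach.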

ChatGPT hadn’t mentioned that.

Not because it was wrong, but because it didn’t know the covenant existed. The CEO hadn’t told it.

This is the subtle risk with AI.

It answers the question you ask.

It cannot see the broader context unless you provide it, and that context is exactly what most non-finance people will not be thinking about.

And in most organisations, the context is exactly where finance adds value.

The same pattern is already appearing in other decisions.

Take pricing.

A sales leader might give AI some historical sales data and ask whether dropping prices by ten percent would increase revenue through higher volume. AI can provide a reasonable discussion of price elasticity and market dynamics. The answer may sound convincing enough to support the decision.

What it may not fully consider is the organisation’s cost structure.

Depending on the margin the product earns, a ten percent price cut might require a thirty percent increase in volume just to maintain the same profit. If the business is already operating near capacity, it may not be able to deliver that extra volume at all, and the price cut simply erodes margin without generating the expected upside.
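The arithmetic behind that claim is worth seeing. A minimal Python sketch, assuming unit variable costs and fixed costs stay the same after the price cut: if the contribution margin is m (as a share of the original price) and the price falls by d, each unit now contributes m - d instead of m, so volume must rise by d / (m - d) just to stand still. The function name and the margins tried below are illustrative.

```python
def required_volume_increase(price_cut: float, contribution_margin: float) -> float:
    """Fractional volume increase needed to keep total contribution unchanged.

    Both arguments are fractions of the original selling price. Assumes unit
    variable cost and fixed costs do not change when the price does.
    """
    new_margin = contribution_margin - price_cut
    if new_margin <= 0:
        raise ValueError("the price cut wipes out the entire unit margin")
    # Old contribution = m * Q; new = (m - d) * Q'. Setting them equal gives
    # Q' / Q = m / (m - d), so the required increase is d / (m - d).
    return price_cut / new_margin


for margin in (0.50, 0.40, 0.30, 0.20):
    uplift = required_volume_increase(0.10, margin)
    print(f"margin {margin:.0%}: volume must rise {uplift:.0%}")
```

At a 40 percent margin, a ten percent cut needs roughly a third more volume; at 20 percent it needs double the volume, which a business near capacity simply cannot deliver.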

Without finance in the conversation, the decision can look commercially sensible but financially damaging.

Inventory decisions provide another example.

Operations teams increasingly use AI tools to optimise ordering quantities and stock levels. The models often focus on supply reliability, lead times and service levels.

What they may not always consider is the impact on cash.

Holding additional inventory may reduce stock-outs, but it can also tie up significant working capital. In an environment where interest rates and financing costs are going up, the financial implications can quickly outweigh the operational benefits.

Or the opposite: the cost of a disrupted supply chain might outweigh the cost of holding inventory, especially if you are bringing stock through the Strait of Hormuz.
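The trade-off in both directions can be sketched the same way. This is a deliberately simple Python example, assuming the only cost of extra stock is financing it at a given annual rate, and that the team can put a rough annual figure on the stock-out or disruption cost the extra stock avoids. The figures and the function name are hypothetical; a real analysis would add storage, obsolescence and insurance costs.

```python
def extra_stock_pays_off(extra_inventory: float,
                         financing_rate: float,
                         disruption_cost_avoided: float) -> bool:
    """True if the annual cost avoided by holding extra stock exceeds the
    annual cost of financing that stock (a simplified carrying cost)."""
    financing_cost = extra_inventory * financing_rate
    return disruption_cost_avoided > financing_cost


extra_stock = 2_000_000  # hypothetical additional inventory held, $

# Same stock-out saving, different interest rates.
print(extra_stock_pays_off(extra_stock, 0.04, 120_000))  # 80k financing cost
print(extra_stock_pays_off(extra_stock, 0.08, 120_000))  # 160k financing cost
# Higher rates, but a far costlier supply disruption is being avoided.
print(extra_stock_pays_off(extra_stock, 0.08, 250_000))
```

The same extra stock flips from worthwhile to wasteful as rates rise, and back again when the disruption it guards against is severe enough.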

Again, AI isn’t necessarily wrong.

It simply answers the question through the lens it has been given.

And people in other functions will use it because it’s easier: they get an answer now (finance is too busy), and they only feed in the context that supports their view (blind biases).

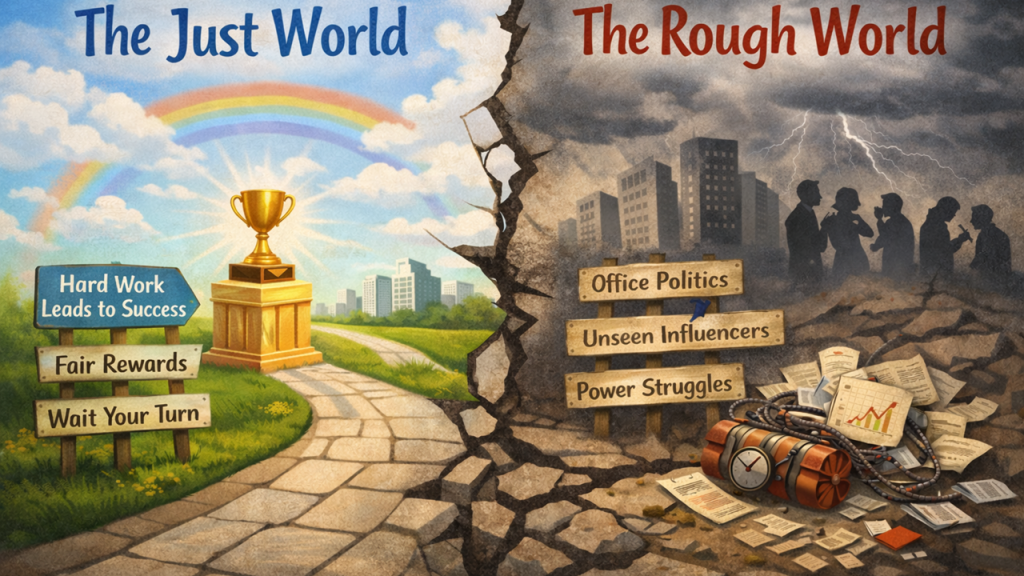

Which brings us to the real risk for finance professionals.

If the business starts using AI to answer the questions they used to bring to you, your relevance is no longer determined by how well you prepare information.

It is determined by how well you see the context, explain the implications and communicate them clearly.

Finance teams have traditionally been trained to produce accurate reports, detailed analysis and technically correct answers. But AI is becoming increasingly capable of producing those things as well.

What AI struggles with is helping leaders understand what the numbers actually mean for the decisions they are about to make.

That requires context. It requires judgment. And most importantly, it requires the ability to communicate clearly.

If finance cannot explain financial implications in a way that operational leaders understand, those leaders will simply ask AI instead.

Not because they prefer AI.

Because it’s faster.

The finance teams that thrive in the AI era will not necessarily be the ones with the most advanced tools or the most sophisticated dashboards.

They will be the ones that can translate financial outcomes into simple, practical guidance for the rest of the organisation.

What does this decision really mean?

Where are the hidden risks?

What might happen next?

These are the questions leaders still need help answering.

And they are the questions great finance business partners are uniquely positioned to answer.

And if we don’t start doing it well, the rest of the business will work around us, risking decisions that are one-dimensional and linear.

Or they will make a few good decisions early on and start asking whether they really need all those resources in finance.